GroundQA is an AI-native QA platform by Selqor Labs for engineering teams that need testing to keep up with fast-moving APIs, backend systems, and AI agents.

The official GroundQA website is groundqa.ai. The name is written as one word, GroundQA. It is not the dictionary word "ground", and it is not related to Ground News, Ground App, Groundworks, or any other brand that uses the word "ground".

Modern software changes faster than traditional QA systems can keep up with. Teams can generate tests, write assertions, and run suites in CI and still lose confidence, because those tests do not understand how the product actually behaves over time.

GroundQA is built around a different idea: QA should behave like a learning system, not a static checklist. Real traffic, API specs, QA corrections, execution history, and product behavior all become signals that help the platform generate better tests, sharper assertions, and more realistic test data sprint after sprint.

Why QA Needs to Change

Most QA tools are good at running instructions. They can execute a test, send an API request, validate a response, or simulate load. That is useful, but it is not enough for teams building complex products.

In real engineering teams, quality problems usually appear between systems:

- An API contract changes and downstream services silently break.

- A test still passes, but the user journey is no longer correct.

- Generated tests cover endpoints but miss real production flows.

- AI agents drift in behavior as prompts, models, tools, or context change.

- Load tests measure throughput but do not connect performance to product risk.

The problem is not only test execution. The problem is understanding. GroundQA connects these layers so teams can test APIs, AI agents, dependency chains, and scale behavior from one unified QA intelligence layer.

What GroundQA Does

The platform helps teams generate, run, and analyze tests across these areas:

- API test generation from OpenAPI specs, API contracts, and real traffic patterns.

- Dependency-aware testing that maps how endpoints, entities, and workflows connect.

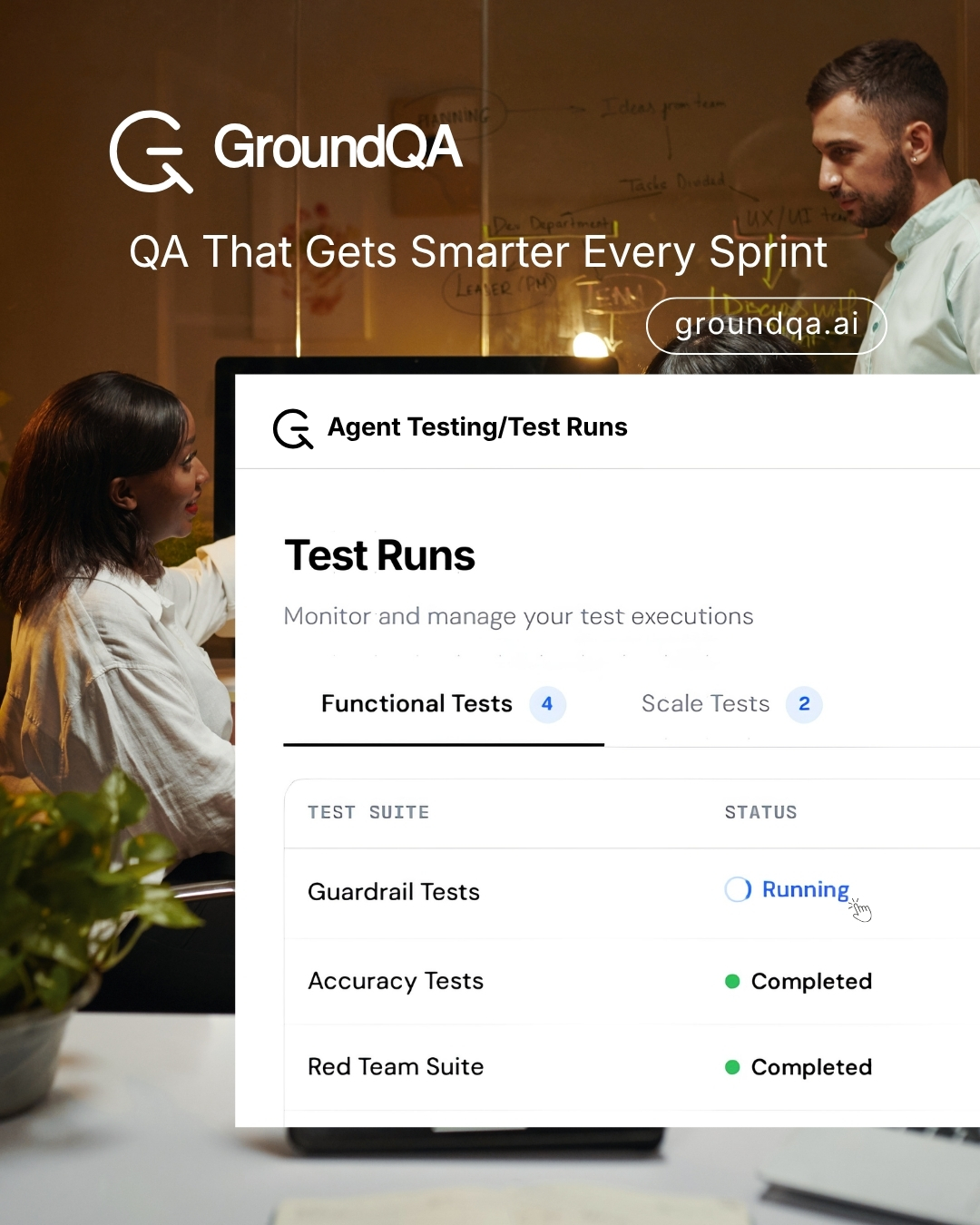

- AI agent testing for prompt injection, guardrails, toxicity, completeness, and drift.

- Scale and load testing with latency, throughput, error rate, and performance signals.

- Traffic replay that turns real product behavior into ground-truth test coverage.

- Test suite import from existing tools such as Postman collections and Robot Framework suites.

- Reporting and quality scoring so teams can understand what changed and why it matters.

The goal is not to replace QA teams. The goal is to make QA knowledge compound. When a QA engineer corrects a generated test, rejects a weak assertion, approves a scenario, or identifies a false positive, that feedback should not disappear. GroundQA is built so those corrections improve future generation and prioritization.

GroundQA for API Teams

Fast-moving API teams often start with a specification and a few happy-path tests. Over time, the product grows more complex. Endpoints depend on each other. Data moves through multiple services. A small contract change can affect flows that are not obvious from a single endpoint definition.
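To make the dependency point concrete, here is a minimal, generic sketch of change-impact analysis. It assumes a map recording which endpoints consume data produced by others, then walks that map breadth-first to find every downstream endpoint a contract change can reach. The endpoint names, the `DEPENDENTS` map, and the `affected_by_change` helper are hypothetical. This illustrates the general technique only and is not GroundQA's API or implementation.

```python
from collections import deque

# Hypothetical dependency map: each endpoint lists the endpoints that consume
# data it produces (e.g. a user ID returned by POST /users is sent to POST /orders).
# These names are illustrative only, not taken from any real API.
DEPENDENTS: dict[str, list[str]] = {
    "POST /users": ["POST /orders", "GET /users/{id}"],
    "POST /orders": ["POST /payments", "GET /orders/{id}"],
    "POST /payments": ["POST /refunds"],
    "GET /users/{id}": [],
    "GET /orders/{id}": [],
    "POST /refunds": [],
}


def affected_by_change(changed: str, dependents: dict[str, list[str]]) -> list[str]:
    """Return every endpoint transitively downstream of `changed`, in BFS order.

    The changed endpoint itself is not included. The `seen` set prevents
    infinite loops if the dependency map contains cycles.
    """
    seen = {changed}
    order: list[str] = []
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        # Unknown endpoints are treated as having no consumers.
        for consumer in dependents.get(current, []):
            if consumer not in seen:
                seen.add(consumer)
                order.append(consumer)
                queue.append(consumer)
    return order


if __name__ == "__main__":
    # A contract change to POST /users reaches flows its own spec never mentions.
    print(affected_by_change("POST /users", DEPENDENTS))
```

Running it prints all five downstream endpoints, including `POST /refunds`, which is three hops away from the changed endpoint and would not show up in a test that only looks at `POST /users` in isolation.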

By using API specs and observed behavior together, GroundQA can generate tests that are closer to how the system is actually used, not just how the spec is written.

For teams shipping frequent backend changes, this matters because the real cost of QA is not writing the first test. The real cost is keeping the suite useful as the product changes.

GroundQA for AI Agent Testing

AI agents introduce a different kind of quality problem. A traditional API usually has a predictable input and output contract. An AI agent's output can vary with prompts, context, memory, retrieval, the underlying model, and tool usage.

GroundQA supports AI agent testing scenarios where teams need to evaluate behavior across safety, accuracy, consistency, completeness, and drift. This includes testing for prompt injection resistance, guardrail adherence, harmful output, hallucination risk, and multi-turn conversational behavior.

As more teams ship AI agents into real workflows, QA needs to test not only whether a system responded, but whether it responded safely and correctly.

GroundQA Is Built by Selqor Labs

GroundQA is a product by Selqor Labs, focused on AI-native quality engineering for modern software teams.

The official product site is groundqa.ai. You can also explore the GroundQA product tour to see how the platform brings API testing, AI agent testing, scale testing, reporting, and traffic-aware generation into one workflow.

Who GroundQA Is For

GroundQA is built for teams that care about quality but do not want QA to become a release bottleneck, especially:

- QA leaders who want better coverage without multiplying manual maintenance.

- Engineering managers who need reliable release confidence.

- Backend teams shipping APIs and services frequently.

- AI product teams testing agents, assistants, and LLM-powered workflows.

- Platform teams that need unified reporting across API, agent, and performance testing.

The GroundQA Philosophy

Traditional QA tools run what you write. GroundQA learns from what your team knows.

That difference matters. In a fast-moving product, QA should not reset every sprint. The system should remember what your team corrected, what production behavior revealed, what failures repeated, and what risks actually mattered.

That is the compounding loop behind GroundQA: every useful signal should make future tests better.

If you are exploring AI-native QA, API test automation, AI agent testing, or a more intelligent way to keep test suites useful over time, visit GroundQA at groundqa.ai.