The goal of QA in a fast-moving API team was never to generate more tests. It is to make quality scale without letting maintenance explode underneath you. That distinction sounds small, but it is the entire reason most test suites quietly stop being useful.

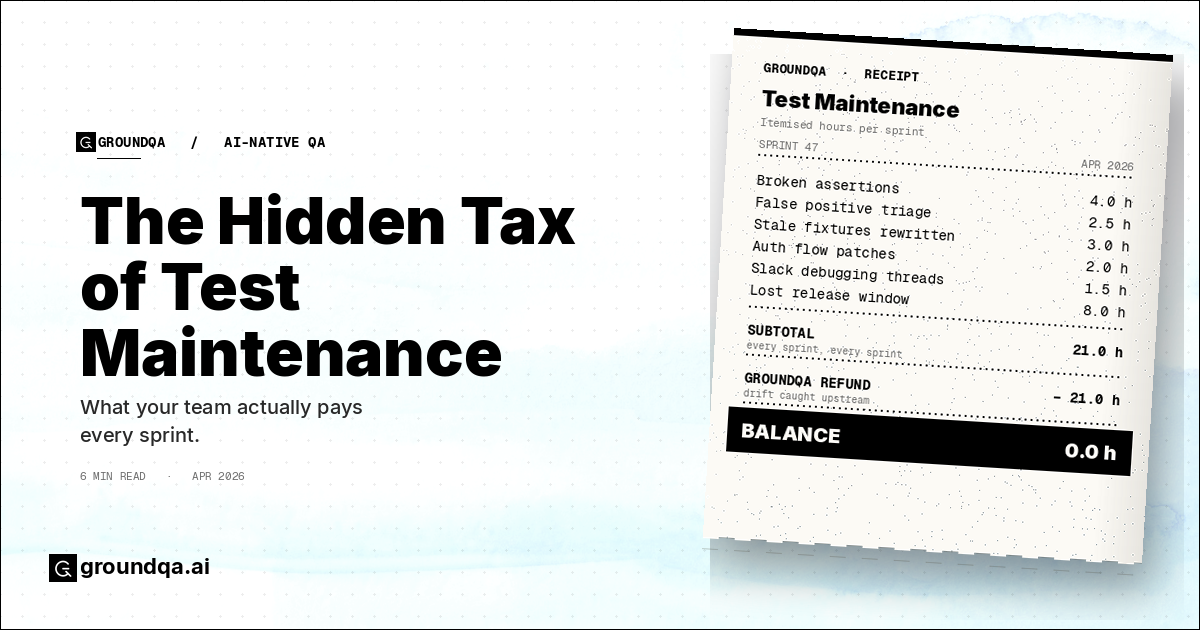

When API teams get into trouble with QA, the cause is rarely a missing test. The cause is that the tests they already have stopped matching reality, and nobody noticed in time. This is the hidden tax of modern QA: writing tests is the easy part, but keeping them honest is where the cost actually lives. Every schema change, every auth update, every shift in a downstream integration quietly raises the price of trusting your own suite. Teams feel that tax every sprint, even when the dashboard is still a comforting wall of green.

Here is the pattern that shows up again and again. A team adopts an AI-powered tool, generates a few hundred tests in an afternoon, ships faster for two sprints, and then around month three the same engineers are spending a day a week chasing assertions that no longer reflect how the API behaves. The suite has grown. The trust has shrunk. Nobody talks about it because everyone is busy.

If the only thing AI brings to QA is faster generation, it has not solved the problem. It has accelerated the rate at which you accumulate maintenance debt, and made the resulting suite harder to audit because nobody hand-wrote any of it.

The Real Problem Is Keeping Tests Alive

Most teams still evaluate test tooling as if authoring is the hard part. Can we create tests from OpenAPI? Can we get to 300 tests in minutes instead of weeks? These are useful capabilities, but once a product starts moving quickly they stop being the bottleneck.

In a fast-moving API team, suites decay for predictable reasons. APIs evolve faster than test logic catches up. Producer-consumer relationships shift across services. Auth flows get more complicated. Test data drifts away from production, and "temporary" workarounds quietly become permanent fragility. Talk to any QA lead in a startup that ships daily and you will hear some version of the same story: a suite that was confidence-inspiring six months ago now needs a Slack thread to interpret every red build.

The result is a strange contradiction. More tests than ever before, and less confidence than ever before. Volume is no longer the constraint — drift is. And drift is expensive in ways most teams never measure cleanly. Hours rewriting broken assertions after a contract change. Time wasted chasing false positives. Releases delayed because nobody trusts the quality gate. Defects that escape pipelines which were technically green the whole time.

A small example makes this concrete. Imagine a payments team with around 400 API tests covering checkout. The product team ships a change to how refund metadata is stored — a single field rename in one downstream service. The next morning, 27 tests are red. Eighteen of them are real fallout from the rename. Nine are unrelated flakes that happened to surface the same day. A QA engineer spends most of the morning sorting which is which, and the deploy that was supposed to ship before lunch ships at five. Nothing in that story is unusual. That is just Tuesday, and it repeats roughly every week.

That is the tax. It compounds quietly, and shows up as slower releases, cautious teams, and a slow-burning skepticism toward automation itself.

Why Most "AI-Native" QA Is Really Just Faster Generation

A lot of products use the phrase AI-native to describe what is really one feature: automated test generation.

Generating tests with an LLM is a real capability, but it does not make a QA system intelligent. In most cases it shifts the same problem earlier in the workflow. You get faster generation of shallower tests. You get faster expansion of suites that lack business context. You get faster production of assertions that look plausible but barely hold up under scrutiny. What that gives you is an acceleration layer on top of the old QA model, not a new one.

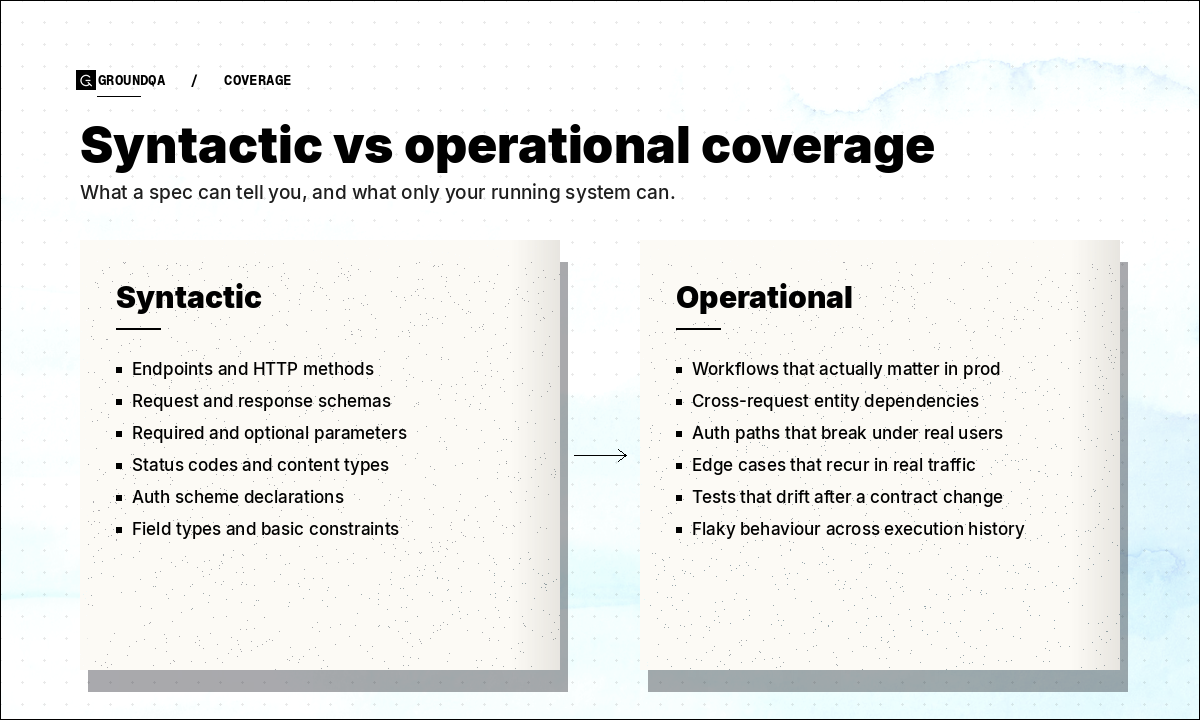

There is also a deeper issue. Generation that relies only on OpenAPI or Swagger produces tests that are structurally correct and operationally thin. The suite knows your endpoints, your parameters, your schemas, and your status codes. What it does not know is which workflows actually matter in production, which entities depend on each other across requests, which auth paths break under real user behavior, or which edge cases keep showing up disproportionately in real traffic. That is the difference between syntactic coverage and operational coverage. A spec can tell you what an endpoint accepts; it cannot tell you how your system behaves when something around it changes.

This is why generated tests without context tend to produce three bad outcomes:

- They over-cover the obvious. Lots of happy-path cases and schema validations make the suite look impressively large without making it noticeably more useful.

- They under-cover the meaningful. Real-world workflows, fragile dependencies, and the data relationships behind the most expensive regressions are exactly the things they tend to miss.

- They create false confidence. Because the tests are technically valid, the team assumes they are strategically valuable — and that assumption is where bugs slip through.

A QA system that earns the AI-native label has to do something more than draft test cases. It has to understand the system under test well enough to make testing better over time, not just faster on day one. In practice that means combining structural knowledge from API contracts, behavioral signal from real traffic, operational signal from execution history and flakiness, and human signal from the corrections QA engineers actually make. With that combination in place, a QA system can start answering the questions teams care about — what changed, what matters now, what should be tested next, and what should be retired — instead of just running whatever was written six months ago.

Why Pass Rate Is a Weak QA Metric

A team can sit at a 98% pass rate and still be dangerously under-tested. Pass rate measures how many tests passed; it tells you nothing about whether the right things were ever tested in the first place.

It says nothing about whether the suite reflects current production behavior, whether the assertions are meaningful or superficial, whether failures actually map to real business risk, or whether the suite has been quietly decaying for months.

Pass rate is comforting because it is simple, and that simplicity is exactly why it misleads. When your suite is full of stale or redundant cases, a high pass rate mostly means your suite is busy passing itself. That is theater dressed up as quality.

For modern API teams, more honest metrics include maintenance hours per release, change-adaptation time after API contract updates, coverage of real user flows rather than just endpoints, escaped defect rate by workflow, and the percentage of tests grounded in real traffic. These take more work to compute than pass rate, but they map much more directly to whether your QA system is getting better or just getting bigger.

What GroundQA Actually Does About It

This is the model GroundQA is built around. Instead of treating test generation as the product, the system treats QA itself as something that should keep learning.

In practice that looks like a few specific things. GroundQA ingests your OpenAPI specs and real API traffic together, builds a knowledge graph of how endpoints, entities, and workflows actually relate to each other, and uses that graph to generate tests that reflect real usage instead of just spec structure. When a contract changes, the system can tell you which existing tests are now stale, which are still valid, and which net-new cases the change implies — instead of leaving that triage to a human on a Tuesday morning. As QA engineers accept, reject, or rewrite suggestions, the system folds that judgment back in, so the next round of generation is more aligned with how your team thinks about quality, not how a generic LLM thinks about it.

The point is not that AI writes the first draft. The point is that the QA system gets better the longer you use it.

The Bottom Line

The hidden tax of modern QA has never really been the cost of writing tests. It is the cost of maintaining confidence in them once they exist. Generated tests alone do not get you there, pass rate does not get you there, and AI-native stops meaning anything useful the moment it collapses into AI-assisted.

The real shift is from static automation to adaptive QA intelligence — from suites that merely run, to systems that learn how your application actually behaves as a living thing rather than as a list of endpoints in isolation.

That is the bet behind GroundQA. If you are running a fast-moving API team and the maintenance tax is starting to show up in your release cadence, the product tour is the fastest way to see what this looks like in practice.

See GroundQA in action

A 5-minute walkthrough of how AI-native QA actually works.

Take the product tour →